Small Devs

Blind Link

A short Unity 2D game, made over a weekend when I had been watching far too many Brackeys tutorials for my own good... Music made using the simple yet excellent Bosca Ceoil. Want to give it a try online?

Blind Link gameplay demo

ElectroLab

Simple synthesiser created for a course on additive and subtractive synthesis. Based on the Web Audio API, deployed using Heroku.
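As a rough illustration of the additive half of that course (this is not the ElectroLab code, which runs on the Web Audio API), the sketch below sums sine partials at integer multiples of a fundamental. The function name `additive_wave` and its parameters are made up for the example.

```python
import math

def additive_wave(fundamental_hz, harmonic_amps, sample_rate=44100, duration_s=0.01):
    """Sum sine partials at integer multiples of the fundamental.

    harmonic_amps[0] is the amplitude of the fundamental,
    harmonic_amps[1] that of the second harmonic, and so on.
    """
    n_samples = round(sample_rate * duration_s)
    samples = []
    for n in range(n_samples):
        t = n / sample_rate  # time of this sample, in seconds
        value = sum(
            amp * math.sin(2 * math.pi * fundamental_hz * (k + 1) * t)
            for k, amp in enumerate(harmonic_amps)
        )
        samples.append(value)
    return samples

# Sawtooth-like tone: the k-th harmonic gets amplitude 1/k (8 partials here).
saw_like = additive_wave(220.0, [1 / k for k in range(1, 9)])
```

Subtractive synthesis goes the other way: start from a harmonically rich signal such as this one and filter partials out.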

Blender Cycles workout

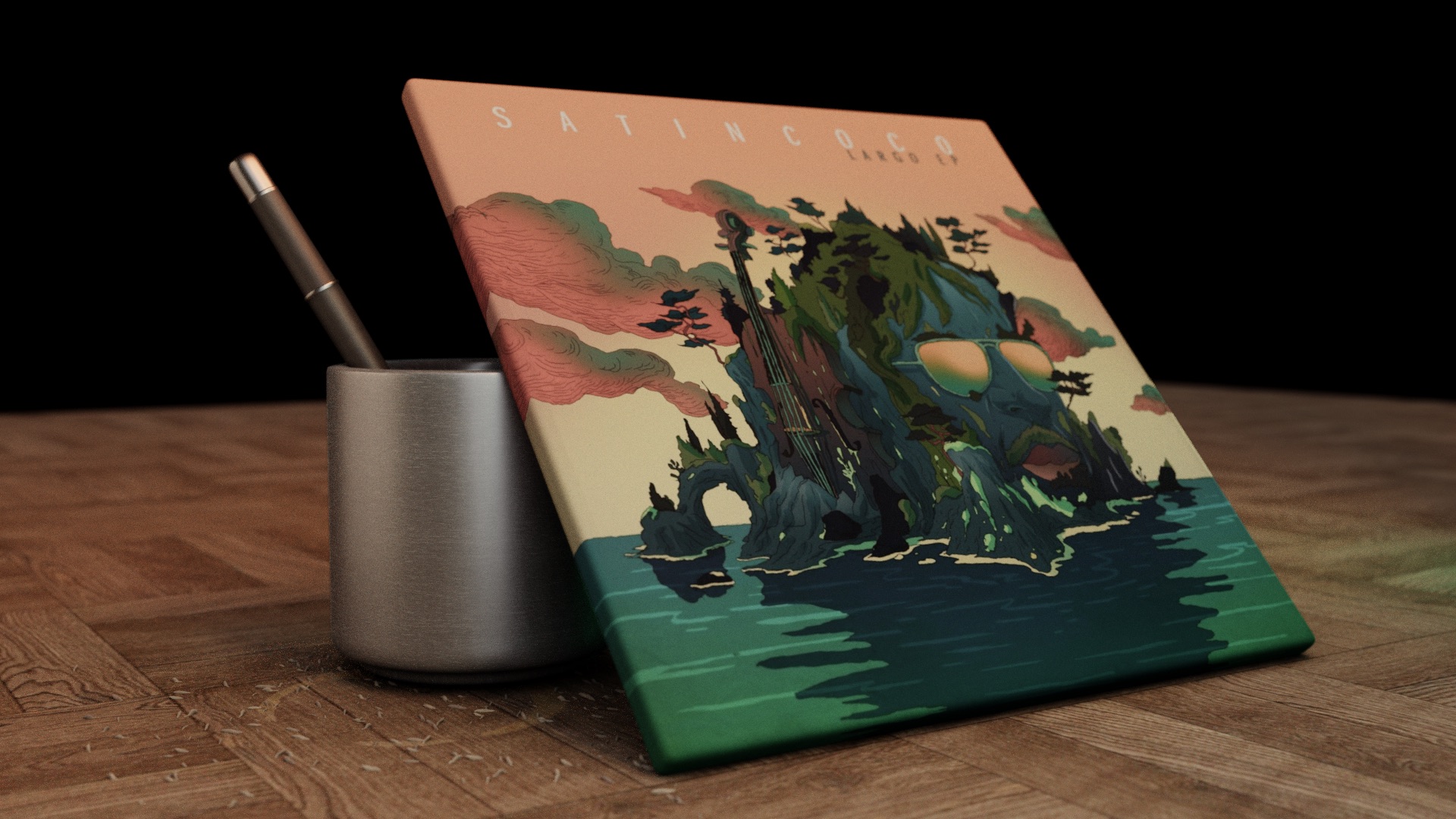

Blender-based cover for the largo EP by Satin Coco

JSAmbisonic

A Web Audio library for Ambisonic encoding / decoding on the web. My thanks to Archontis for easing the pain of JavaScript development with his efficiency and cheerfulness.

JSAmbisonic GitHub (live demo there)

Point Cloud in the Blender Game Engine

Point-cloud recording with a Kinect2 (via libfreenect2). Real-time rendering in the Blender Game Engine, based on a GLSL shader by Dalai Felinto (GitHub). Part of the Echo Project, with development directed by Brian F.G. Katz at LIMSI-CNRS. Original music courtesy of Jeremie Poirier-Quinot (YouTube channel).

Point-cloud projection: tryout

Still based on point-cloud recording, but with a calibrated room acoustic model. Think of it as a VR theatre, where one can attend a given show in different theatre rooms (modifying both the visual and acoustic rendering in real time). (project website)

Point-cloud projection: speech on a theatre stage

The Blob

This mini-game was born from an evening of idleness at home with my brother: I was finalizing a Blender project while he was working on a piece of music. After a coffee break we decided to dedicate the remaining hours of the day to what became “The Blob” (ok, some would say it does not deserve a name at that stage). More music @ Satin Coco’s SoundCloud.

The Blob gameplay demo

RTS state machine

Playing with state machine and ScriptableObject implementations in Unity, following an excellent tutorial by Jason Weimann, to create a minimalist RTS game architecture.
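For readers unfamiliar with the pattern, here is a minimal, language-agnostic sketch of a condition-driven state machine, written in Python rather than Unity C#. It is not the tutorial's code: the `StateMachine` class, the state names and the `unit` dictionary are all hypothetical.

```python
class StateMachine:
    """Holds a current state and switches when a transition condition is met."""

    def __init__(self, initial):
        self.current = initial
        self._transitions = []  # list of (from_state, to_state, condition)

    def add_transition(self, from_state, to_state, condition):
        self._transitions.append((from_state, to_state, condition))

    def tick(self):
        # Take the first transition out of the current state whose condition holds.
        for from_state, to_state, condition in self._transitions:
            if from_state == self.current and condition():
                self.current = to_state
                break
        return self.current

# Hypothetical RTS unit: idle until it gets a target, then moves, then harvests.
unit = {"has_target": False, "at_target": False}
fsm = StateMachine("idle")
fsm.add_transition("idle", "moving", lambda: unit["has_target"])
fsm.add_transition("moving", "harvesting", lambda: unit["at_target"])
```

Calling `fsm.tick()` once per frame keeps the unit's behaviour in one place; each state only needs to know which conditions let it hand over to the next.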

Blender Cycles render of a Minecraft map export

Just because it looks nice and brings back memories of so many adventures.